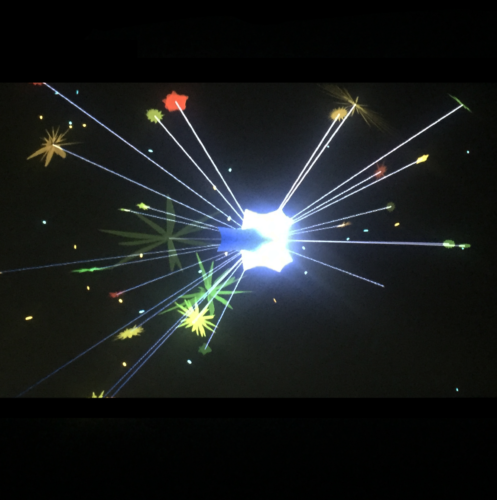

Sparkle

by: Haruna Sawai

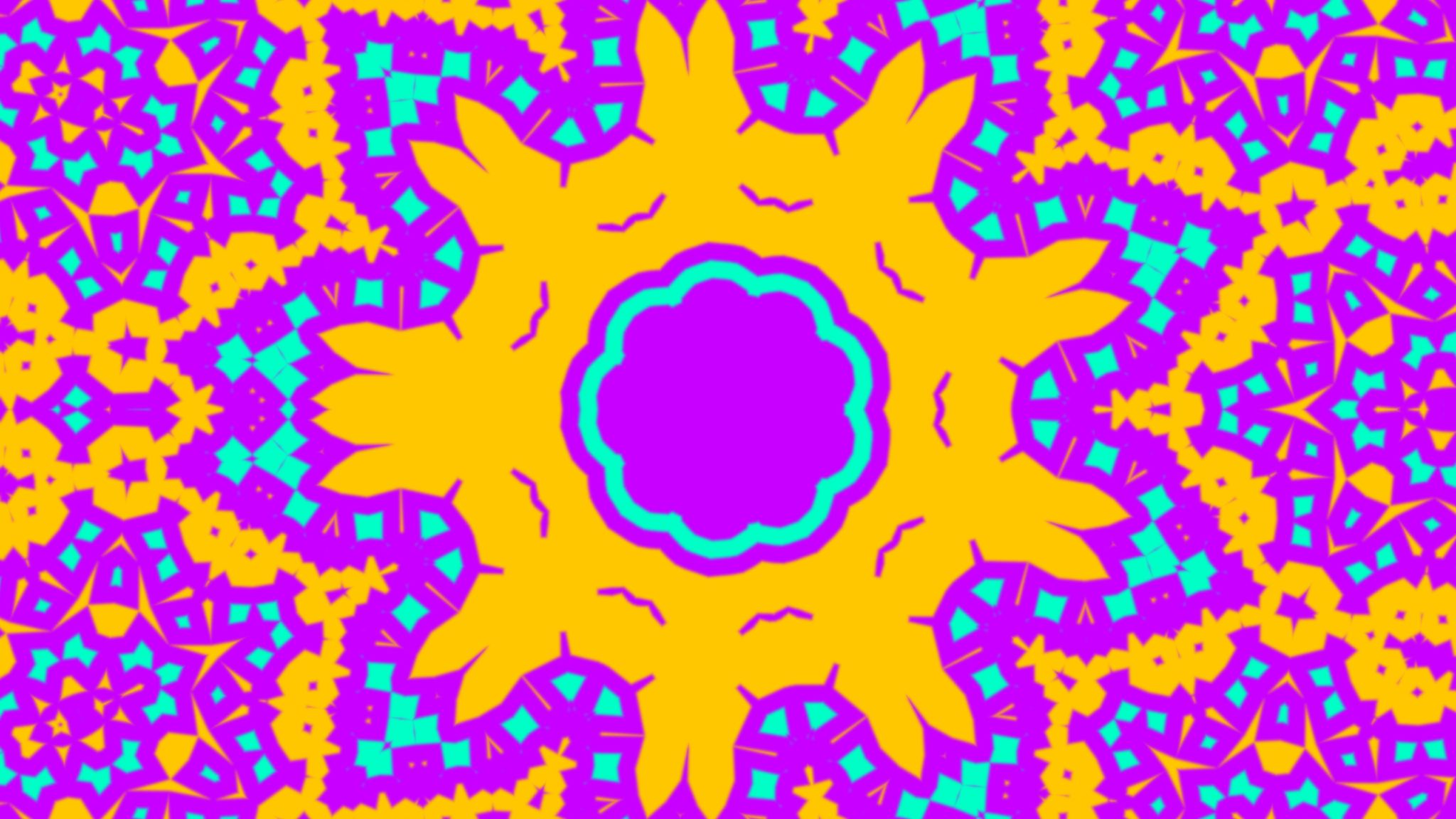

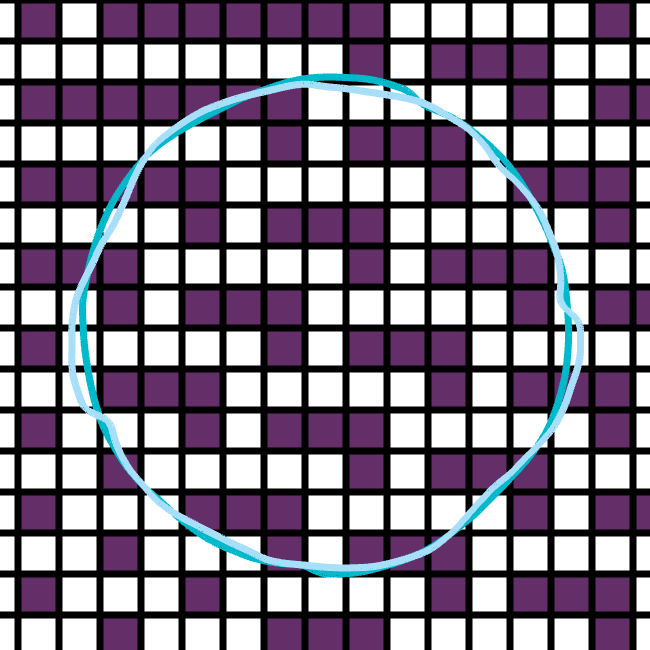

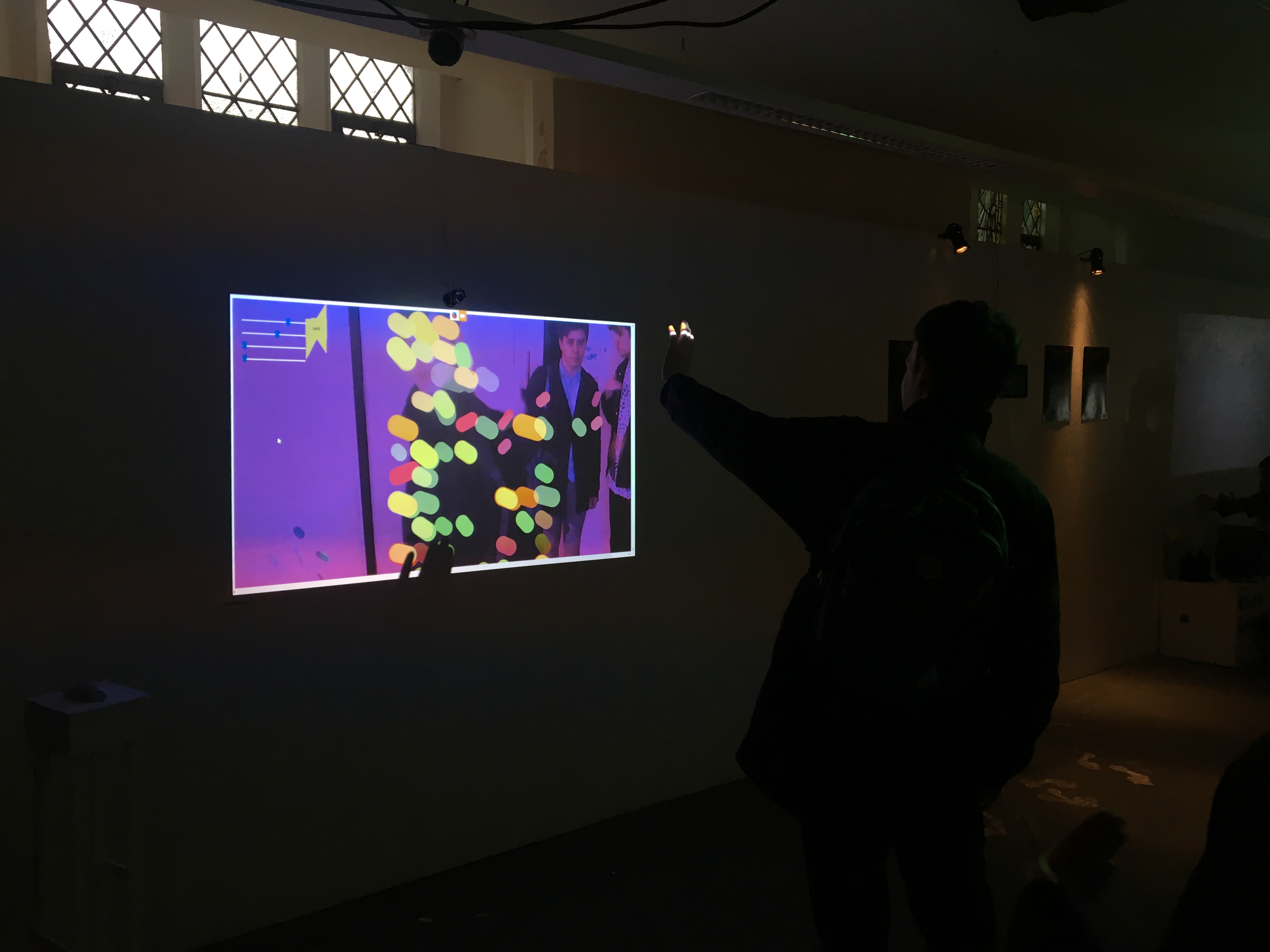

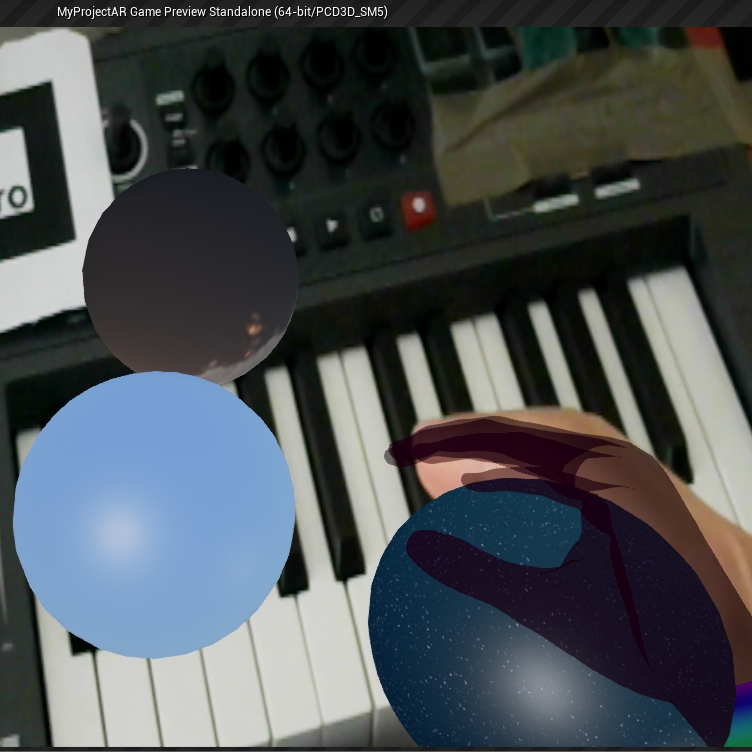

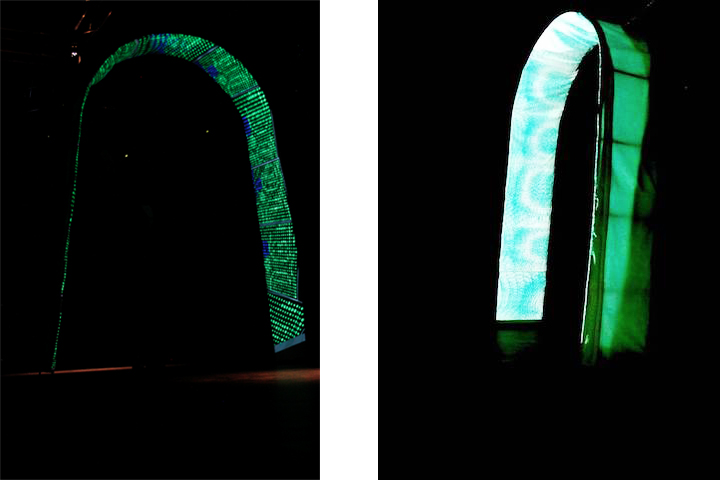

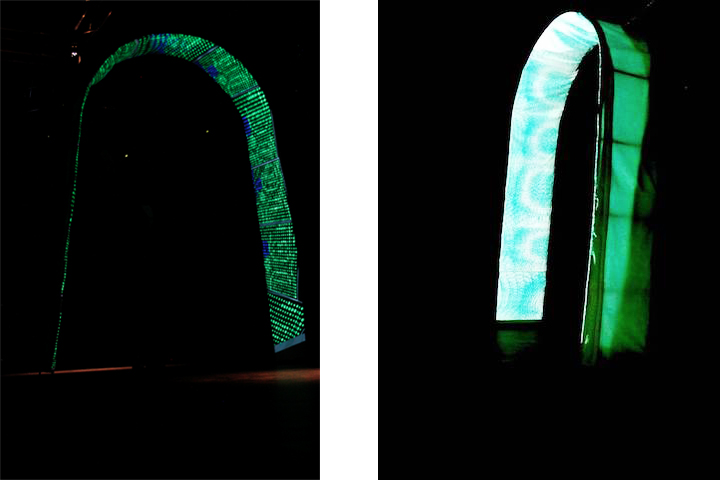

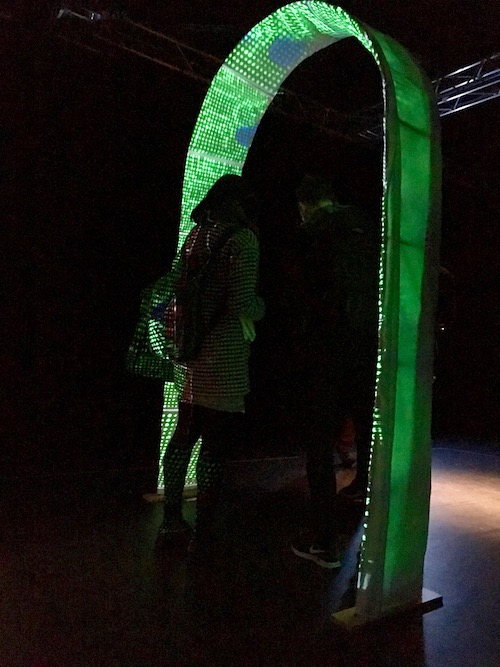

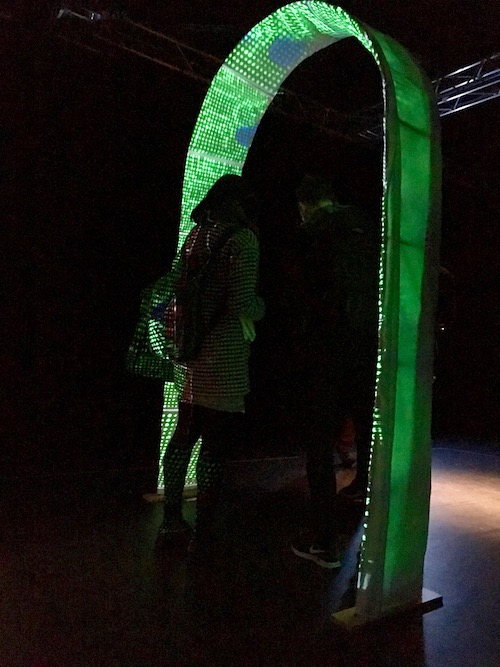

Sparkle is a participatory, interactive installation that challenges our perceptions and enriches the view through places and emotions. The installation takes the form of a large arch with one long cloth running through it, onto which the animation is projected. The program switches between two animations, both of which are set on a timer. Guests can control the animation with an iPhone that sends OSC messages to the program, so the animation moves according to their movements.
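As a rough illustration of the timer-based switching described above, here is a minimal sketch in plain C++. The struct name and the durations are hypothetical and are not taken from the project code; it only shows one way to choose between two animations from the elapsed time.

```cpp
#include <cmath>

// Hypothetical helper: decides which of two animations is active at a given time.
// The durations are illustrative assumptions, not values from the installation.
struct AnimationTimer {
    float firstDuration;   // seconds the first animation plays per cycle
    float secondDuration;  // seconds the second animation plays per cycle

    // Returns 0 while the first animation should play and 1 for the second.
    int activeIndex(float elapsedSeconds) const {
        const float cycle = firstDuration + secondDuration;
        if (cycle <= 0.0f || elapsedSeconds < 0.0f) {
            return 0;  // fall back to the first animation on invalid input
        }
        // Position inside the current cycle, wrapping around at the end.
        const float t = std::fmod(elapsedSeconds, cycle);
        return t < firstDuration ? 0 : 1;
    }
};
```

In an openFrameworks app, a value such as `ofGetElapsedTimef()` could be passed in as `elapsedSeconds` on every frame, and the returned index used to pick which animation to draw.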

Audience

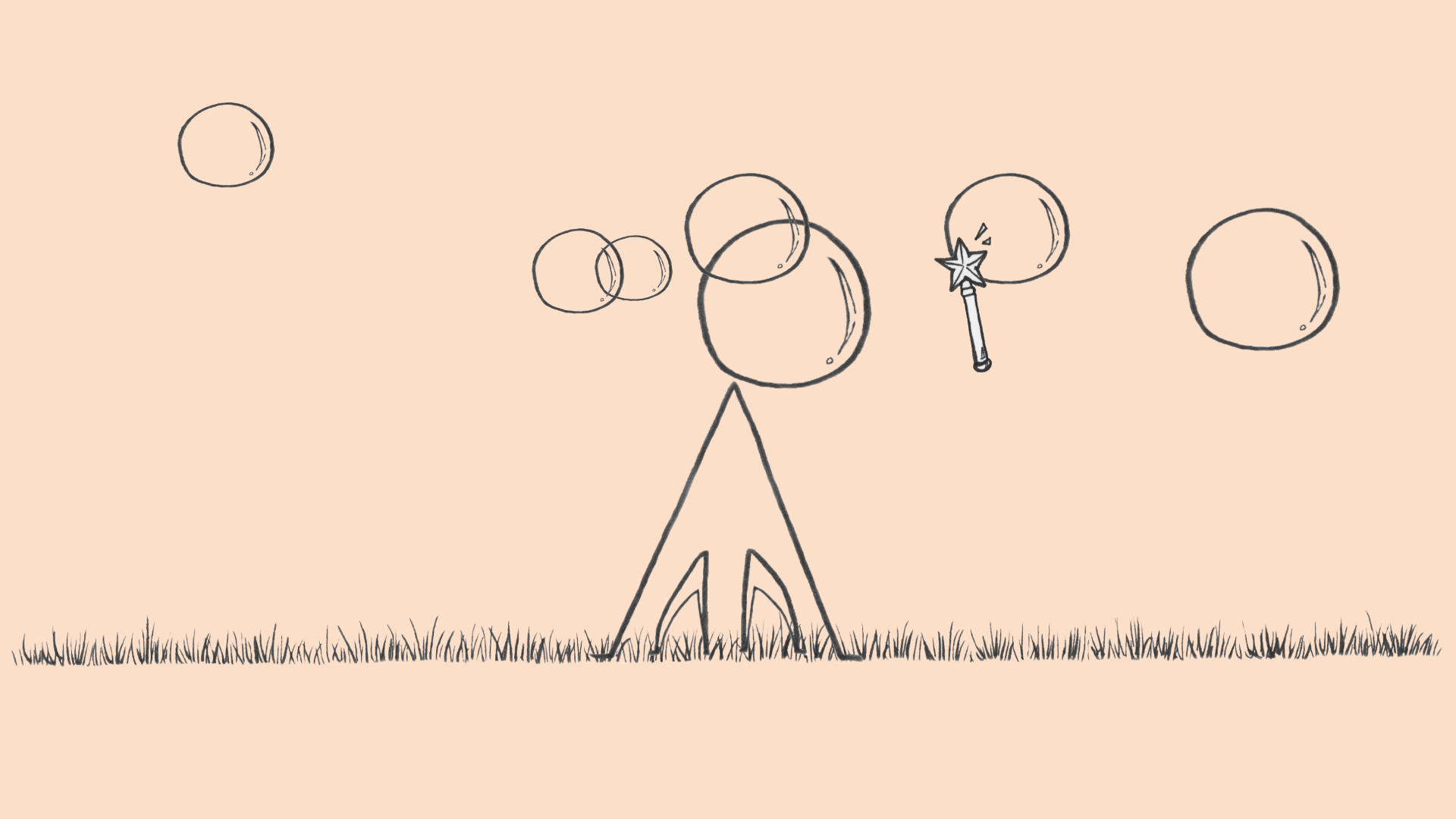

When people think of programming, they tend to picture the fundamentals of computing, such as algorithms and complex programs. With this project, I wanted to show something simple yet interactive, to tell viewers that code does not have to be complicated to produce something beautiful. The project focuses on creating animations that are futuristic and inspired by optical illusions. The code for the animations is quite simple yet interesting to look at, which let me accomplish the concept of keeping the code simple yet fascinating.

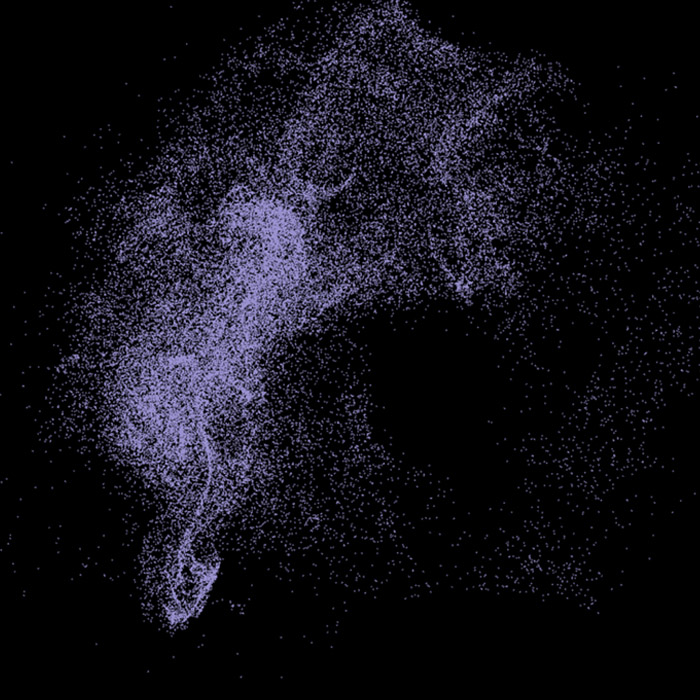

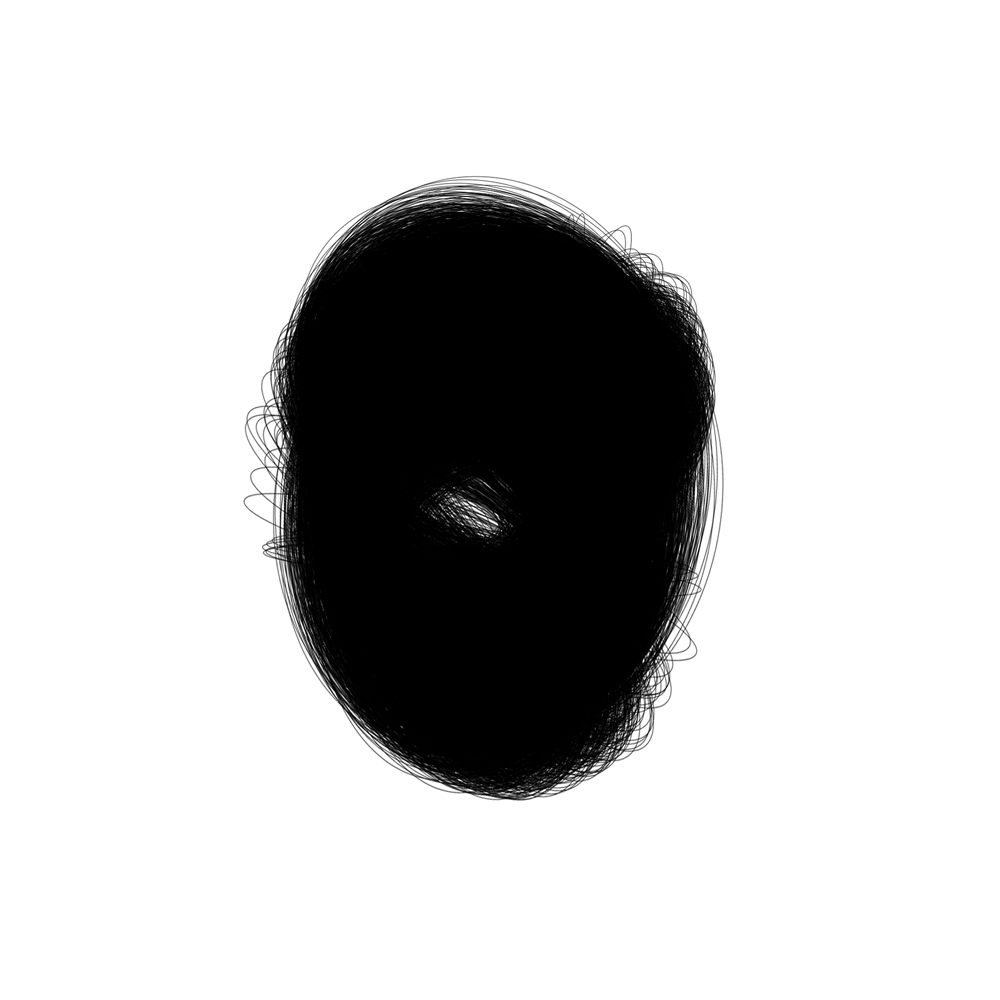

The intention of the installation was for guests to walk through the arch unsure of what the animation is telling them. As they play around with the motion sensor, they discover the meaning and purpose of the work. I wanted viewers to engage with my work by taking pictures, looking at the animations in detail and touching the arch itself to see how the animation is projected and what it is projected onto. It was interesting to see people observing the animation very closely, as you could literally see pixels moving around due to the projection.

Background Research

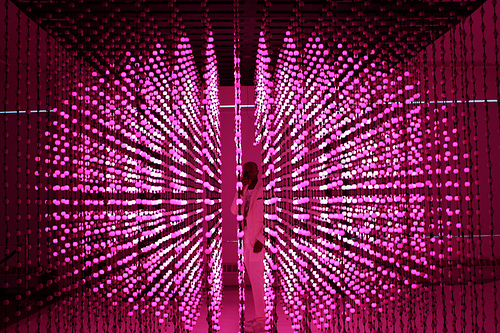

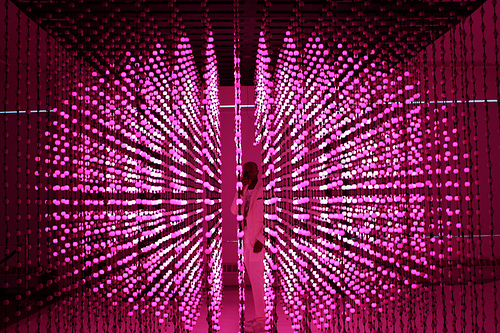

Having an interest in interactive visual art, I came across the artist Muti Randolph's light installation "Deep Screen" at Creators Project in New York. The piece is a 3D display of 6144 pixels with over 6 million different colours. The content includes 8 different scenes and it reacts to people's movements. The animations can change once people get inside the installation, because it senses visitors' actions. His concept of involving the audience in the piece is very similar to my project, as he likes to see their facial expressions, whether surprised, excited or confused. He is also keen to create a very immersive environment so the audience can feel it and see it from all angles.

"Deep Screen"

Because I needed projection mapping to display the animations on the arch, I was fascinated by 'Lighting the Sails of the Sydney Opera House'. Vivid LIVE design collective Universal Everything illustrates the sails of the Sydney Opera House using a mix of projection mapping, hand drawings and CGI illustration. The performance includes sections where the animation drips off the building, climbs up it, and flies around inside, exploring the shapes and curves and bouncing around within them. The performance challenges people's preconceptions of the building and how they have seen it before, in the hope that after the show people will see it in a new light.

'Lighting the Sails of the Sydney Opera House'

I was keen to project animation onto an object. I came across this idea when I visited Tokyo Disney Sea this year. The parade used many visual effects, such as Disney animations projected onto a spray of water. I was mainly attracted to the part of the performance where Disney characters were shown on an enormous spherical stand. This made it possible for the animations to be shown in 360°, so that everybody could view the parade regardless of where they were standing. This helped me decide to use some sort of object, on a smaller scale, for my project, to create a sight that audiences can view from any angle.

Tokyo Disney Sea parade "Fantasmic!"

Creative Process and aesthetic choices

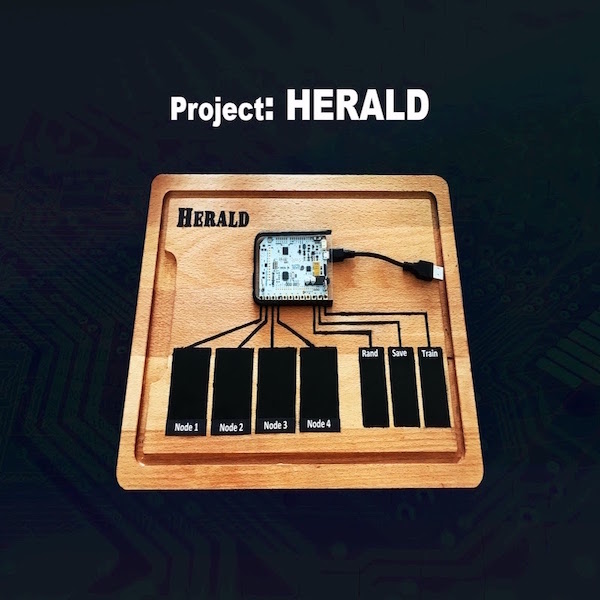

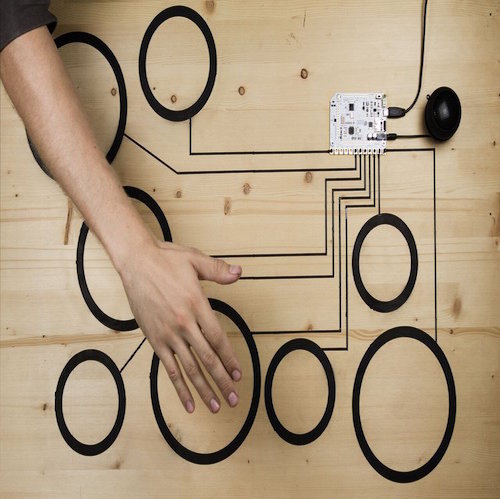

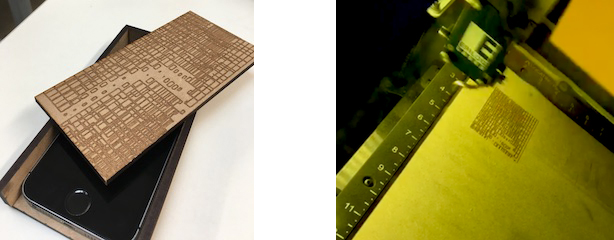

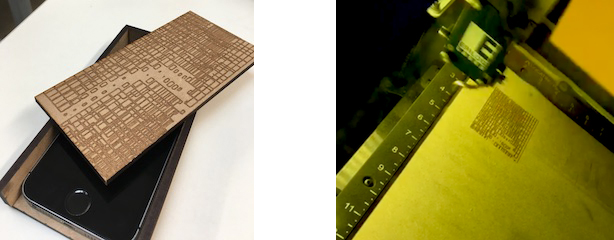

The starting point for the project was that it should definitely involve a sensor. My original idea was to create a box with a sensor inside that interacts with a Bluetooth sensor, so that when the Bluetooth sensor is triggered, an animation plays. I researched a Bluetooth sensor called the Estimote Beacon, which I thought would be perfect for the exhibition. However, the Estimote needed more work before it could be used with OpenFrameworks, so I abandoned the idea. I was then introduced to GyrOSC, an iOS app that can send motion sensor data over the local wireless network to any OSC-capable host application. It can control animations with the device's built-in gyroscope, accelerometer and compass. I was able to connect the app using the OSC receive example from OpenFrameworks, which opens a port. After setting up the app, I created a few animations that interacted with mouse movement to start with, then adapted the code so that the sensor drove the animations. It was challenging even to move the animation using GyrOSC, as I needed to work out all the values that might be required. Despite the struggles, I got the interaction working by gradually adjusting the values. I also made a wooden case for the iPhone. The pattern, taken from one of the animations, was engraved with a laser cutter.
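The step of adjusting sensor values until the animation responds well can be sketched as a range remap followed by smoothing. This is a minimal, hypothetical example in plain C++: the function names and the ranges used below are assumptions for illustration and are not taken from the project code.

```cpp
#include <algorithm>

// Linearly remaps value from [inMin, inMax] to [outMin, outMax],
// clamping so that out-of-range sensor readings cannot overshoot.
float mapClamped(float value, float inMin, float inMax, float outMin, float outMax) {
    if (inMax == inMin) {
        return outMin;  // avoid dividing by zero on a degenerate input range
    }
    float t = (value - inMin) / (inMax - inMin);
    t = std::max(0.0f, std::min(1.0f, t));
    return outMin + t * (outMax - outMin);
}

// Moves previous a fraction of the way towards target.
// amount is between 0 (ignore new readings) and 1 (no smoothing).
float smoothTowards(float previous, float target, float amount) {
    return previous + (target - previous) * amount;
}
```

For example, a tilt reading assumed to lie between -1 and 1 could be mapped to a horizontal position between 0 and the window width, then smoothed each frame so that jitter from the sensor does not make the animation shake.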

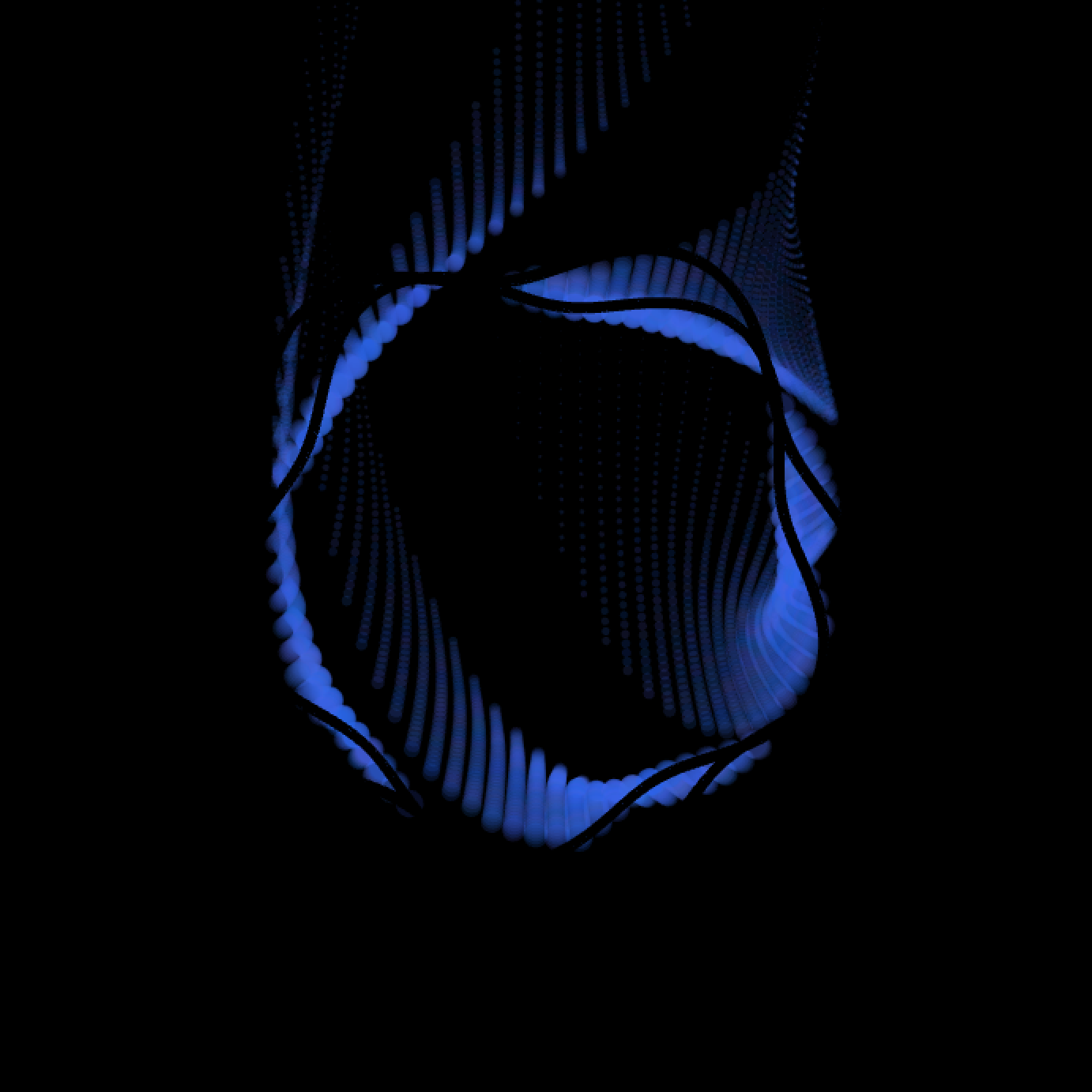

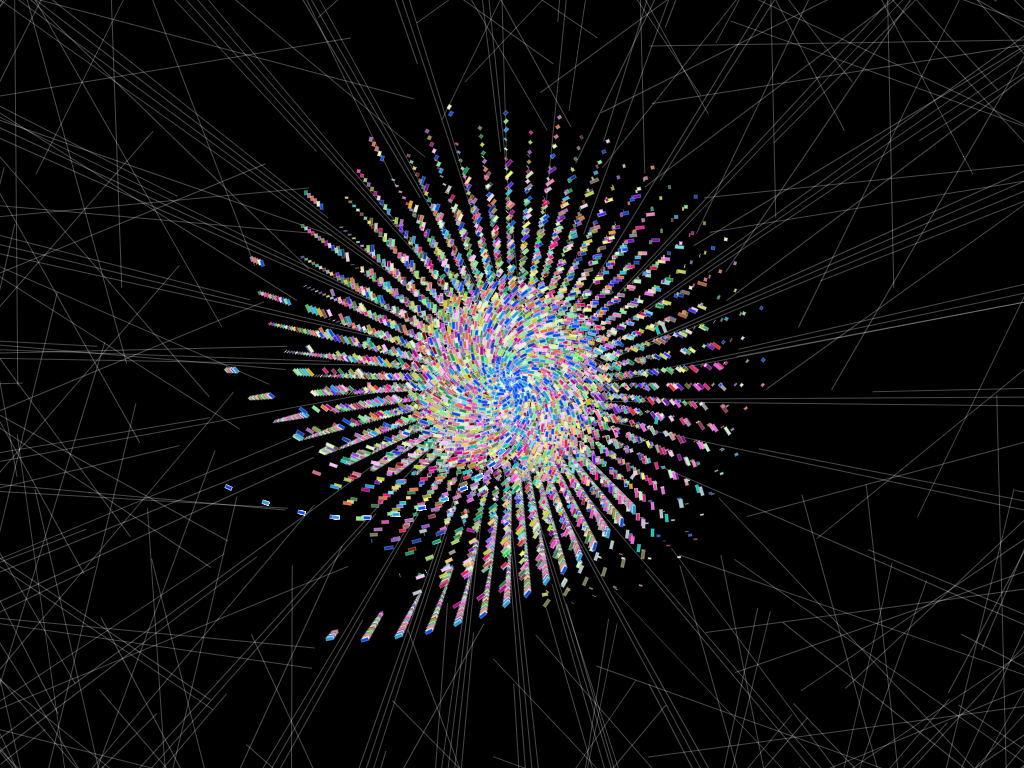

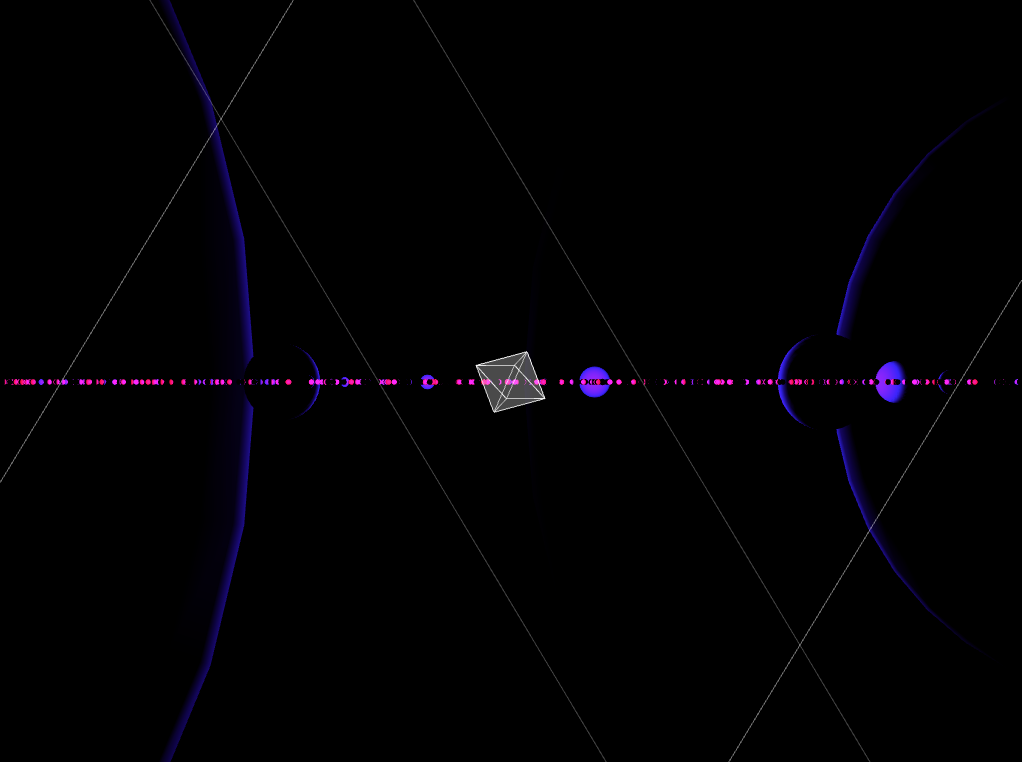

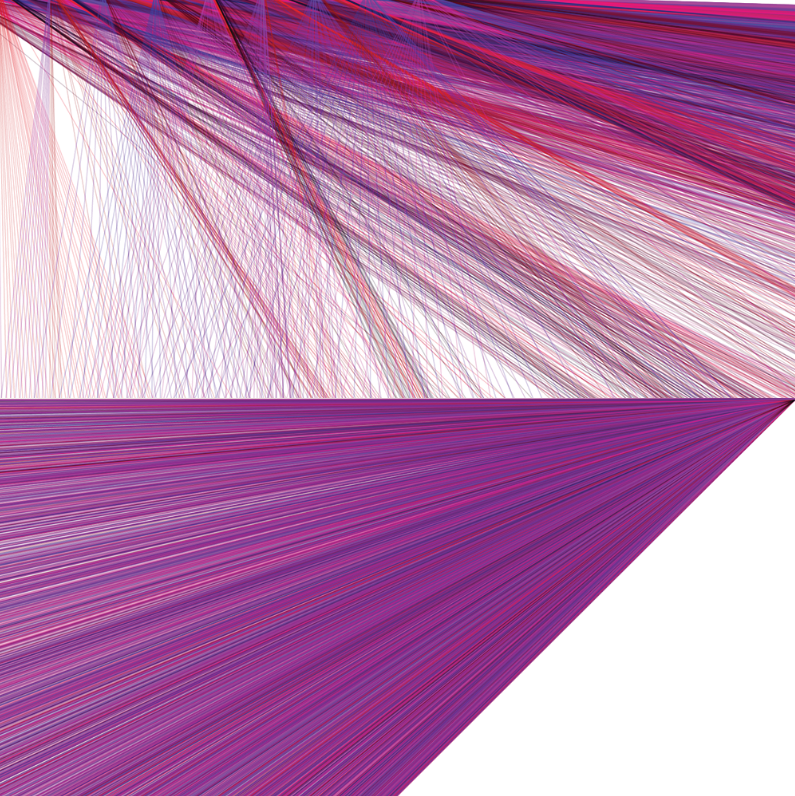

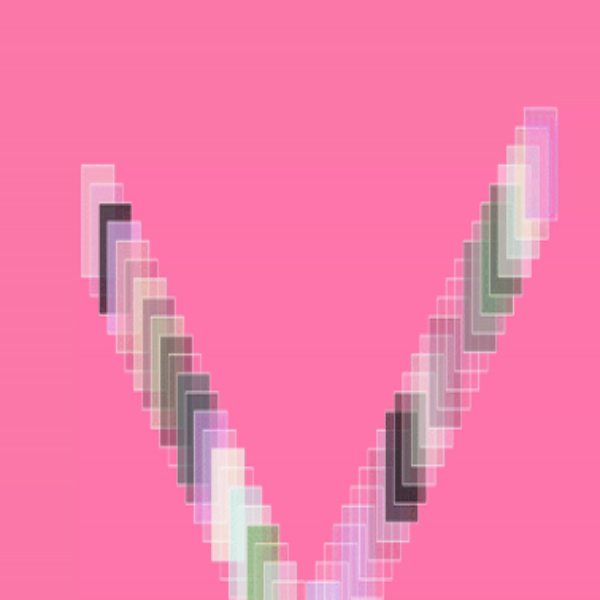

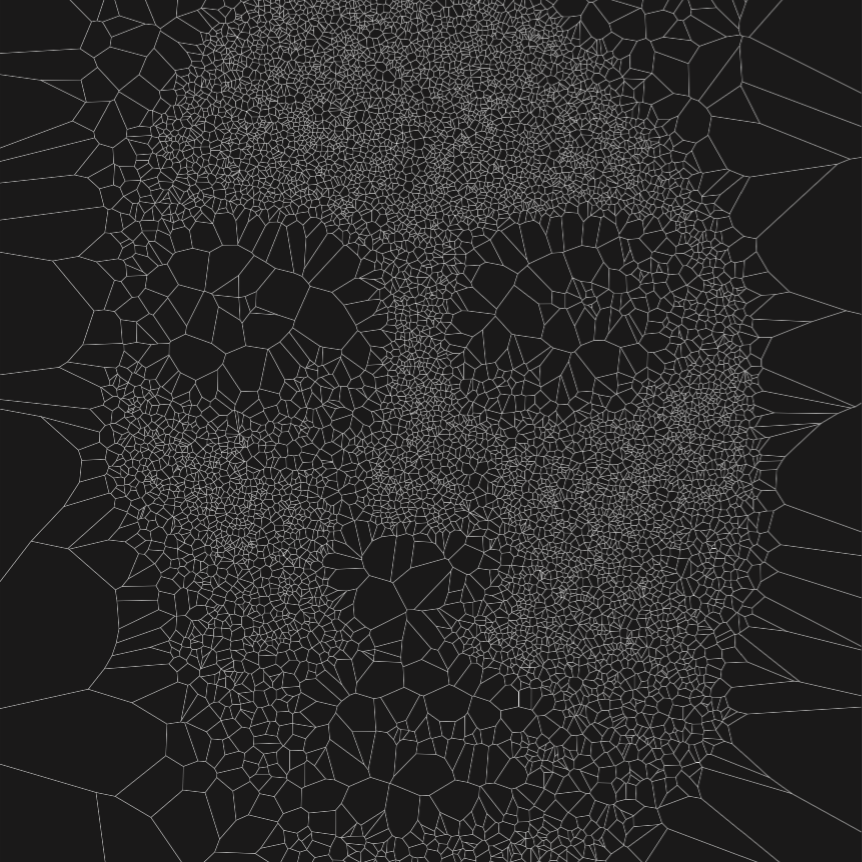

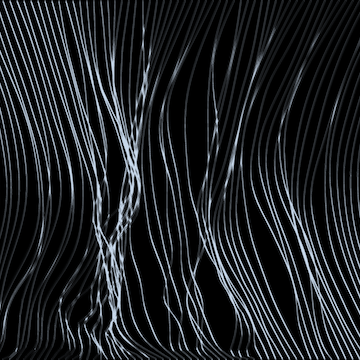

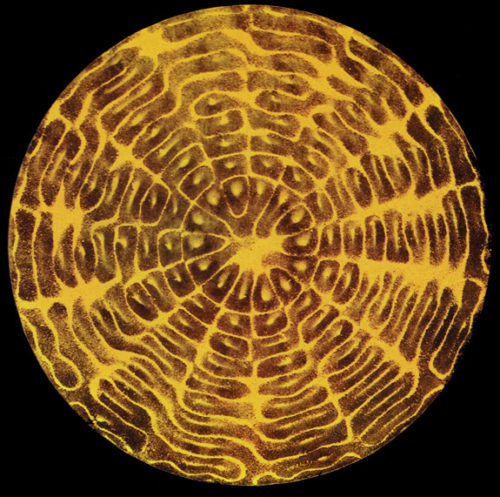

The animations were chosen to create an illusion of infinity. One of the animations gives the impression of an eye and the other creates a matrix/technology mood. For the installation, I made an arch with the animation mapped onto its inside. I chose an arch as the display to create the atmosphere of an entrance to technology for the viewer: an entrance to a world where anything is possible and exciting.

Process of making iPhone box

Problems and Build

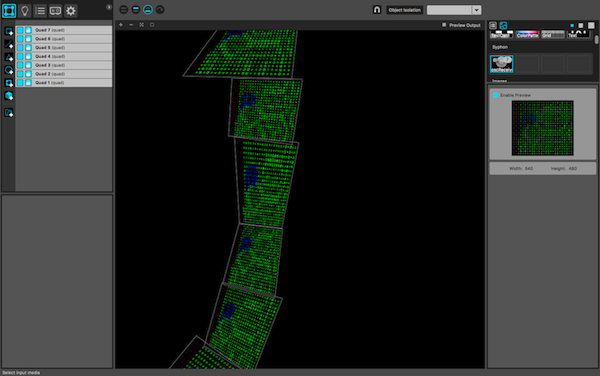

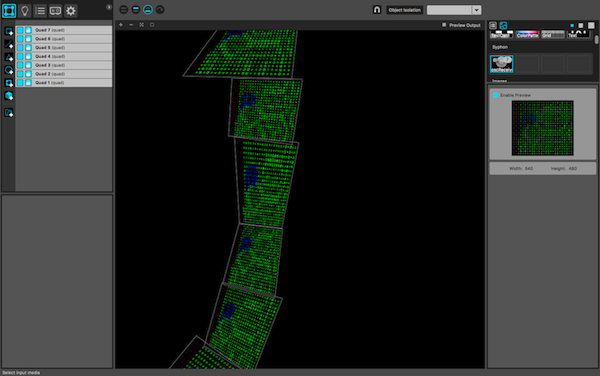

The main problem I encountered was connecting GyrOSC to the OSC receive example. I found it really difficult to understand how OSC sends and receives messages. Working on the Eduroam network was painful, as many people are connected to it and it kept disrupting my program. The program crashed once during the exhibition because of the network; however, it was recompiled and ran perfectly fine afterwards. Mapping the animation onto the arch was also very problematic. I first tried to map it with an addon called ofxMtlMapping2D, which made the mapping process very hard, so I decided to use ofxSyphon to send the animation to the mapping software MadMapper. Sending the animation through Syphon reduced its quality: the pixels were very obvious, although people found it interesting to see them moving across the surface. It worked well; however, if I were to map something in the future, I would program it manually using texture mapping to make the mapping process more accurate.
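To make the OSC idea more concrete: each OSC message carries an address (a path-like string) and a list of arguments, and the receiver decides what to do based on the address. The sketch below is a simplified stand-in in plain C++, not the ofxOsc API. The `OscMessage` and `MotionState` types are hypothetical, and the addresses `/gyrosc/gyro` and `/gyrosc/accel` are assumptions about what the app sends, so they should be checked against the GyrOSC documentation.

```cpp
#include <string>
#include <vector>

// Simplified stand-in for a received OSC message: an address plus float arguments.
struct OscMessage {
    std::string address;
    std::vector<float> args;
};

// Latest sensor readings that the animation code could read each frame.
struct MotionState {
    float pitch = 0.0f, roll = 0.0f, yaw = 0.0f;  // from the gyroscope message
    float ax = 0.0f, ay = 0.0f, az = 0.0f;        // from the accelerometer message
};

// Updates state from one message. Returns false, leaving state untouched,
// when the address is unknown or the message has too few arguments.
bool applyMessage(const OscMessage& msg, MotionState& state) {
    if (msg.args.size() < 3) {
        return false;
    }
    if (msg.address == "/gyrosc/gyro") {  // assumed address
        state.pitch = msg.args[0];
        state.roll = msg.args[1];
        state.yaw = msg.args[2];
        return true;
    }
    if (msg.address == "/gyrosc/accel") {  // assumed address
        state.ax = msg.args[0];
        state.ay = msg.args[1];
        state.az = msg.args[2];
        return true;
    }
    return false;
}
```

In openFrameworks, the same pattern would typically read the address with `getAddress()` and each value with `getArgAsFloat()` on messages pulled from an `ofxOscReceiver`. Ignoring unknown or incomplete messages, as above, is one way to keep unexpected traffic on a shared network from affecting the animation.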

Mapping process

Evaluation

Although working with sensors is challenging, I was able to create an installation that has a big presence in the dark room. I believe I accomplished most of the ambitions I set myself. The viewers were very eager to understand my piece and how I built it, which I was very pleased about, as it shows that they were interested in my work. It was very nice to see viewers observing and discussing my work among themselves, and taking photos and videos to remember it by. I was very satisfied to see the animations projected very clearly even though the projection surface was fabric. If my programming skills were more advanced, I would put more effort into the sensor, for example changing the animation when the sensor is moved vigorously instead of using a timer.
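The idea of switching animations on a vigorous movement could be sketched as follows. This is a hypothetical example in plain C++ and was not part of the installation: the class name, the threshold and the cooldown are all assumptions, and the accelerometer values are assumed to arrive as three floats.

```cpp
#include <cmath>

// Hypothetical helper: toggles between two animations when the phone is shaken.
class ShakeSwitcher {
public:
    // threshold: acceleration magnitude that counts as a vigorous movement (assumed units)
    // cooldown: minimum number of seconds between two switches
    ShakeSwitcher(float threshold, float cooldown)
        : threshold_(threshold), cooldown_(cooldown), lastSwitch_(-cooldown), current_(0) {}

    // Feeds one accelerometer sample taken at time now (in seconds).
    // Returns the index (0 or 1) of the animation that should be shown.
    int update(float x, float y, float z, float now) {
        const float magnitude = std::sqrt(x * x + y * y + z * z);
        const bool cooledDown = (now - lastSwitch_) >= cooldown_;
        if (magnitude > threshold_ && cooledDown) {
            current_ = 1 - current_;  // toggle between animation 0 and 1
            lastSwitch_ = now;
        }
        return current_;
    }

private:
    float threshold_;
    float cooldown_;
    float lastSwitch_;
    int current_;
};
```

The cooldown is there so that a single shake, which produces several strong samples in a row, only switches the animation once.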

Gitlab Repository

http://hsawa001@gitlab.doc.gold.ac.uk/hsawa001/Creative-project-Exhibition.git

References

[1] GyrOSC http://www.bitshapesoftware.com/instruments/gyrosc/

[2] MadMapper http://www.madmapper.com/

[3] OpenFrameworks Addons http://ofxaddons.com/categories

[4] ofxSyphon example https://github.com/astellato/ofxSyphon